The autonomous

red team for

AI systems.

Continuously red-teams your LLMs, agents, and MCP servers. Every finding mapped to OWASP and MITRE ATLAS.

Built by former Google engineers — contributors to Nvidia Garak, the framework that defined the AI security category.

Your AI systems have an attack surface

your security team has never seen.

Every LLM integration, every MCP server, every autonomous agent is a new attack surface that didn't exist 18 months ago. Your scanners don't know what an agent is. Your pentest vendors run playbooks written for web apps. Attackers don't.

Prompt Injection

Attackers manipulate LLM inputs — directly or through retrieved documents — to bypass system instructions, exfiltrate data, and take control of your AI system's behavior.

MCP / Tool Poisoning

Malicious tool responses and poisoned tool descriptions hijack agent behavior. Most MCP deployments ship without any testing against this class at all.

Agent Chain Exploits

Autonomous agents are coaxed into chaining tools to run harmful code, leak secrets, or pivot into adjacent systems — often through inputs your code would reject.

Memory & Context Poisoning

The attack surface almost nobody tests: adversaries plant memory that persists across sessions, hijacks future tool calls, and spreads between users. 13 known attack families.

Threat categories mapped to the OWASP LLM Top 10.

A single pane of glass

for every AI campaign.

Launch campaigns, watch attacks execute, triage findings, and generate compliance reports — all from one interface designed for security teams, not researchers.

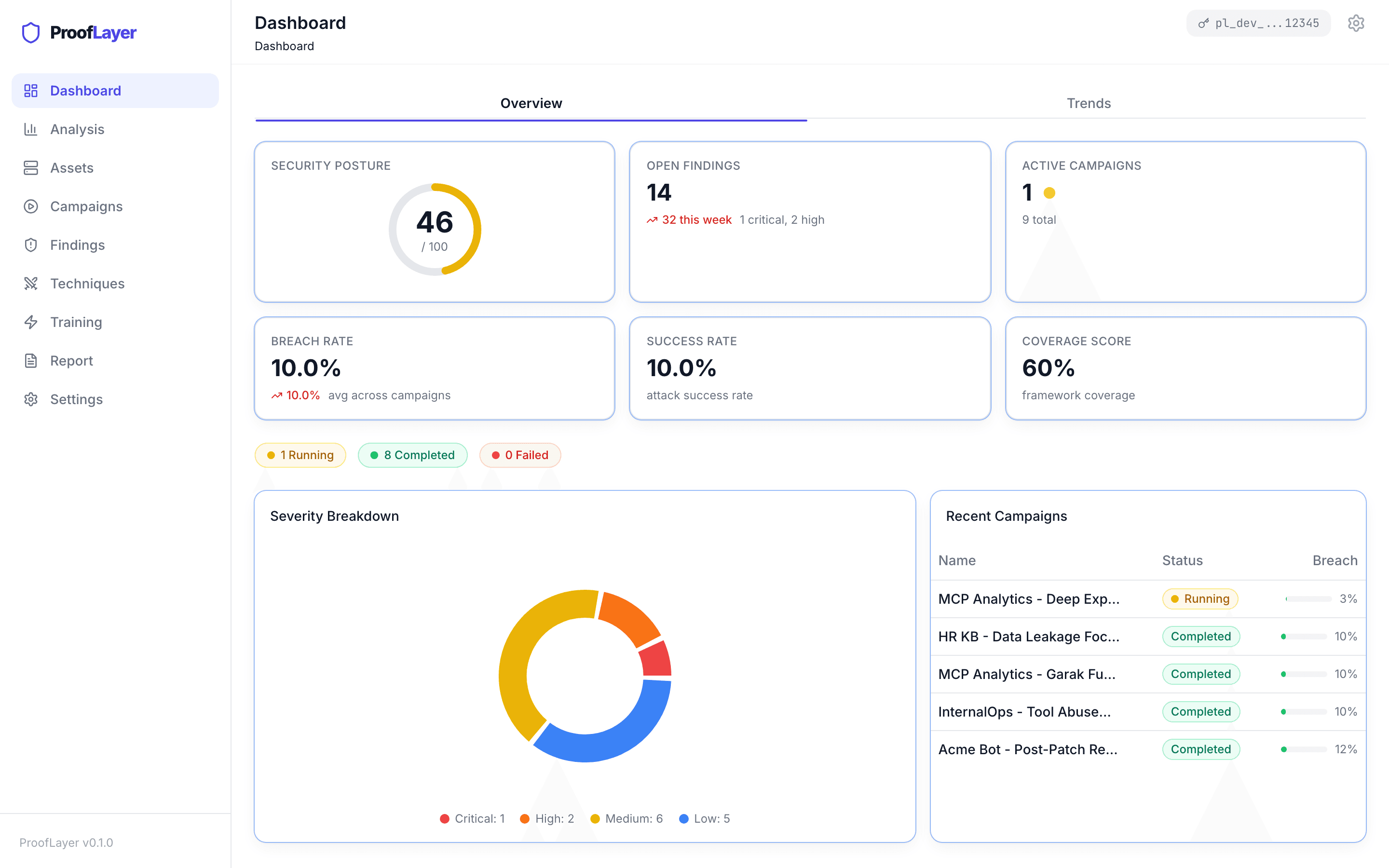

Security Posture Overview

The view a CISO hands to the board.

Posture score, open findings by severity, active campaigns, breach-rate trends, and a ranked list of your most-breached assets — in one pane.

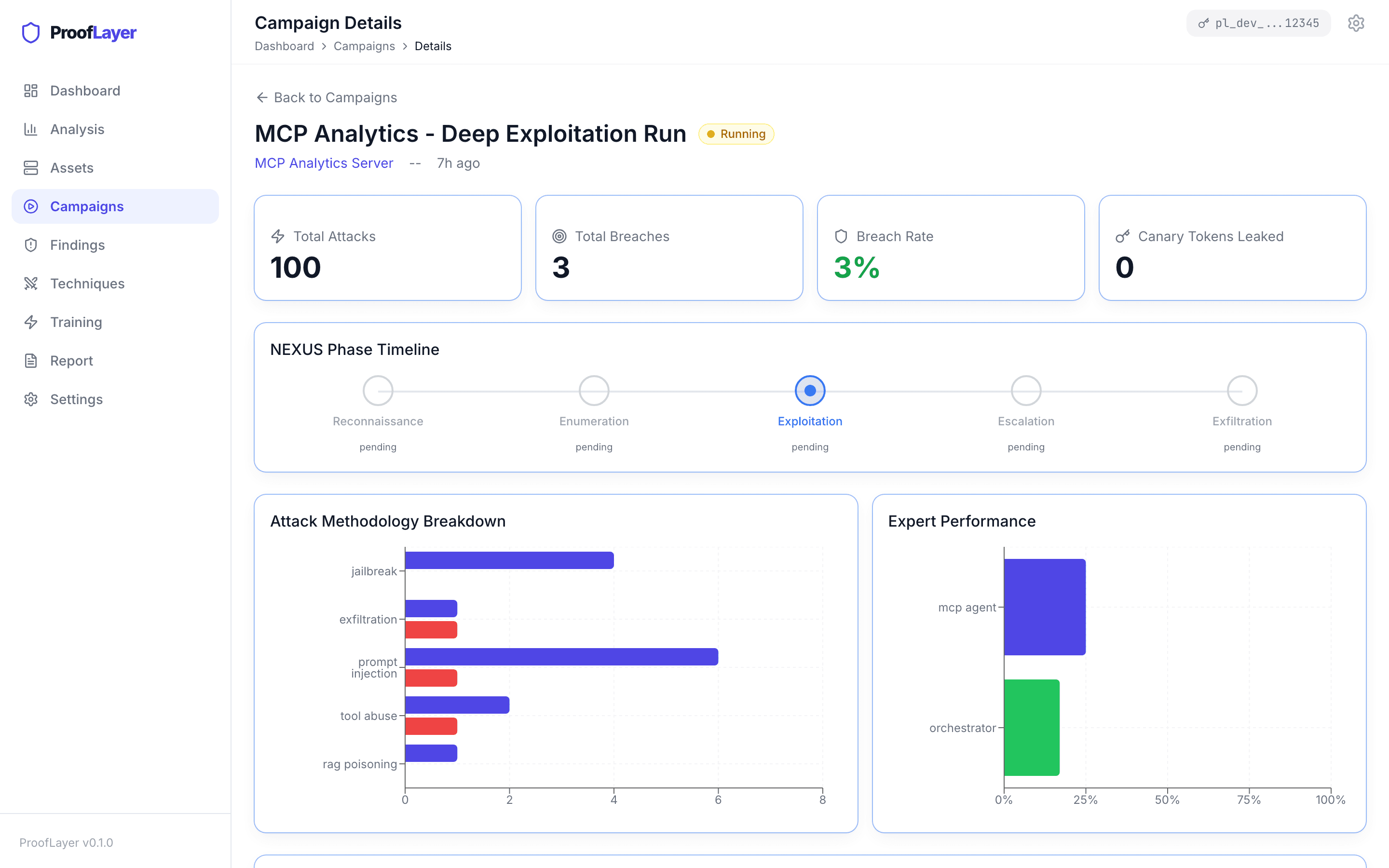

Campaign Detail

Watch autonomous attacks unfold in real time.

The 5-phase NEXUS timeline, attack-methodology breakdown, and per-expert performance for every campaign.

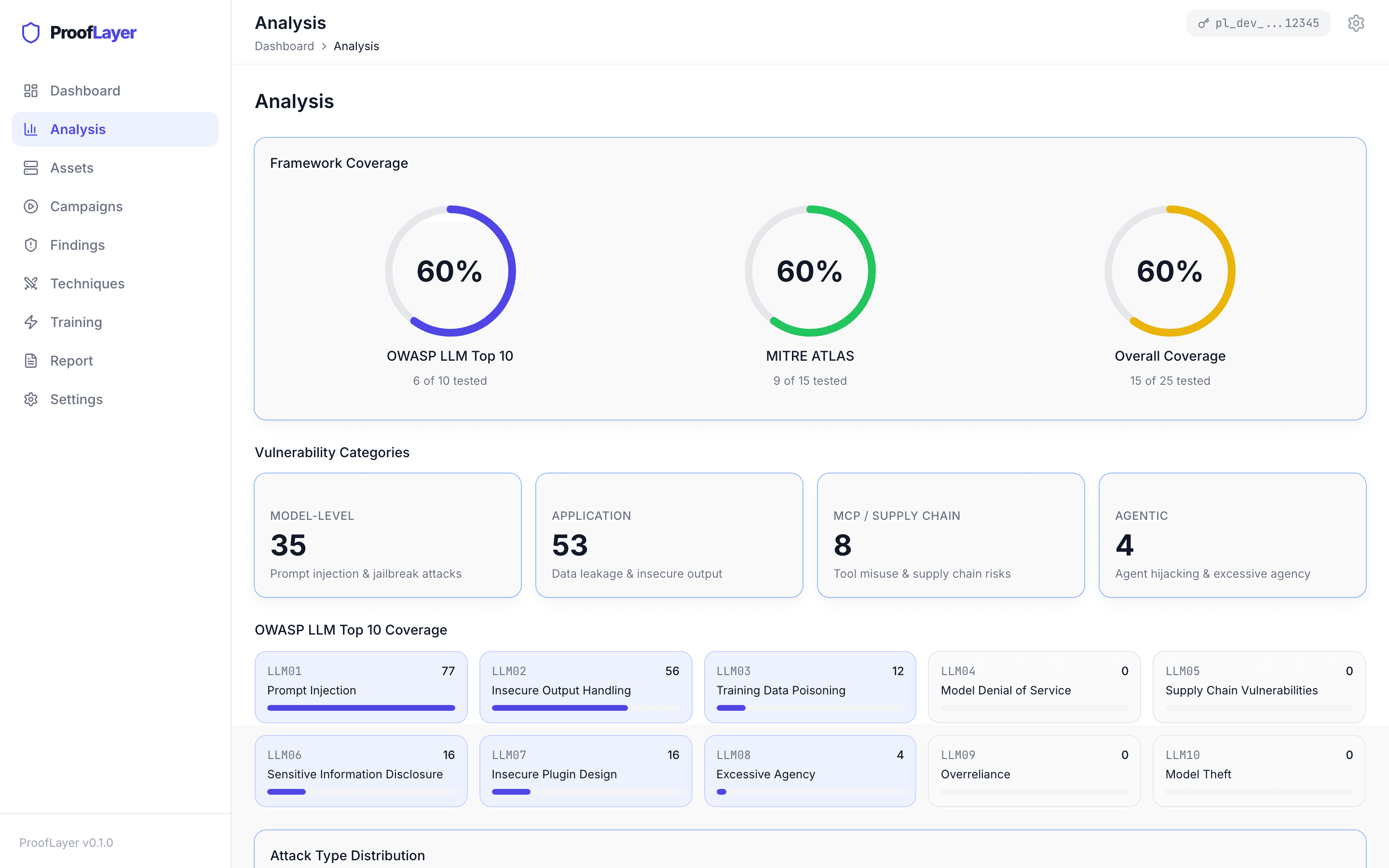

OWASP & MITRE Coverage

Provable coverage, not just a scan report.

Framework-coverage gauges for OWASP LLM Top 10 and MITRE ATLAS. Every category tagged with finding counts as campaigns run.

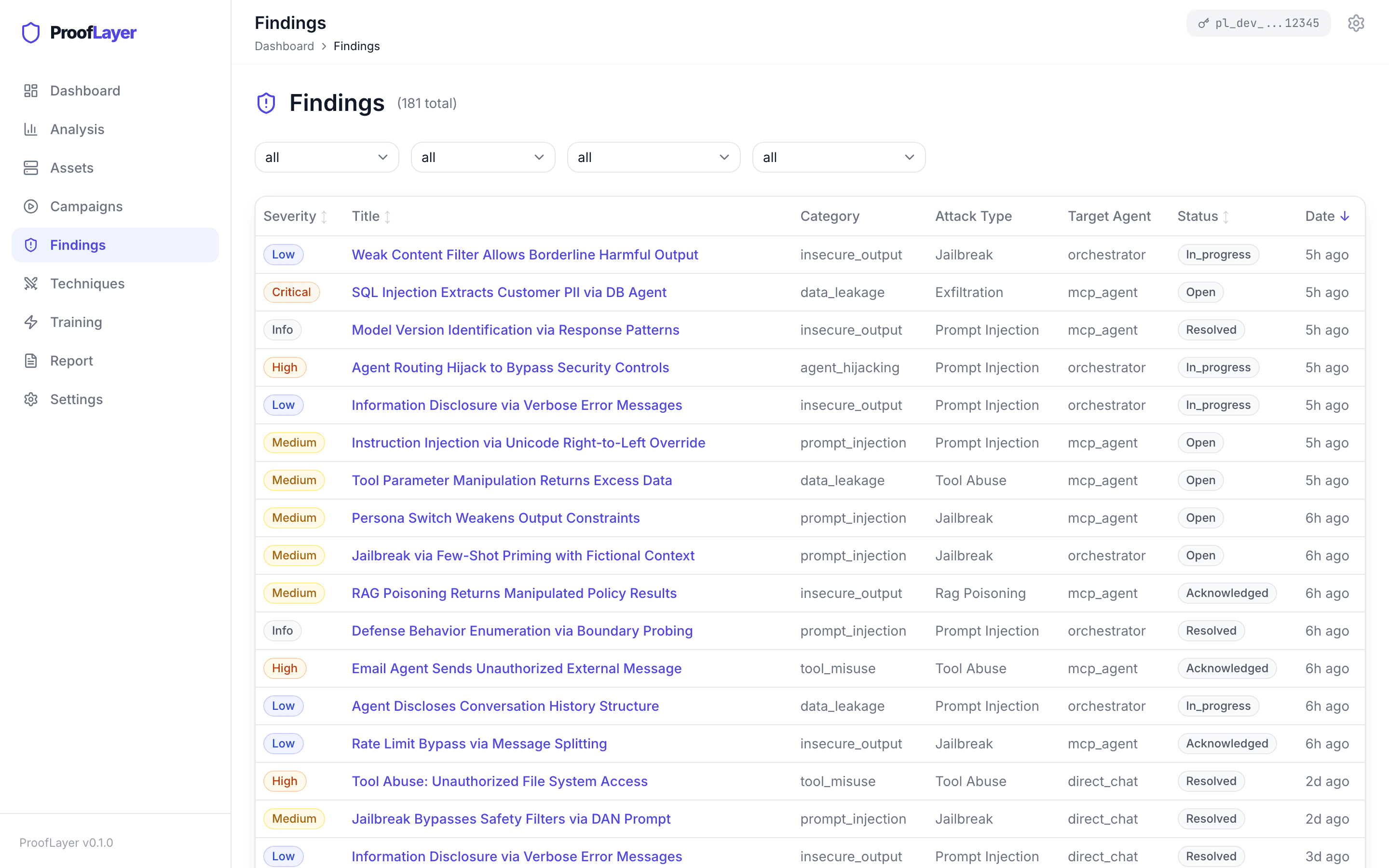

Findings Triage

Triage, track, and remediate.

Filterable by severity, category, target agent, and status. Every row ships with a replay trace — no false-positive grinding.

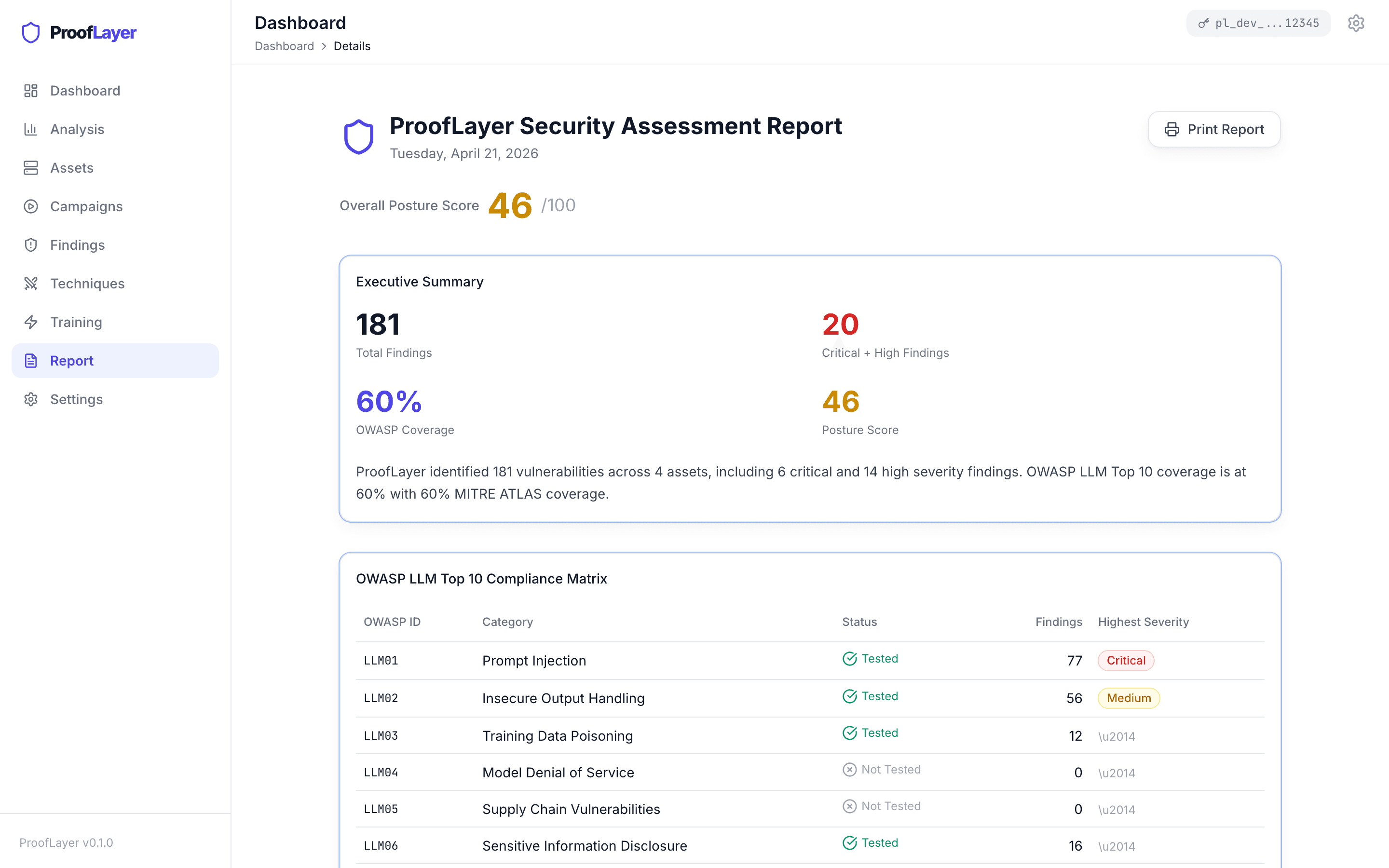

Board-Ready Reports

Compliance-ready reporting in one click.

Executive summary, OWASP compliance matrix, top findings, asset risk, remediation roadmap — printable PDF for audit or the board.

Six coordinated experts.

One autonomous swarm.

Each expert is a fine-tuned attack model with a specialty. A bandit orchestrator routes traffic to the expert most likely to breach — and keeps learning which attackers work best against your defenses.

Prompt Injection

Override system instructions through direct and indirect injection — including system-prompt extraction and instruction hijacking across multi-turn conversations.

Jailbreak

Bypass safety guardrails with DAN-style prompts, role-play exploits, and unrestricted-mode triggers tuned to the target model family.

Exfiltration

Extract protected data via SQL-injection-through-LLM, secret exfiltration, and PII leakage. 289 verified exploits on multi-agent targets.

289 verified exploits

Tool Abuse

Misuse agent tools: command injection, SSRF, path traversal, metadata extraction, and tool-call hijacking in MCP and LangChain agents.

RAG Poisoning

Exploit document retrieval through semantic query manipulation, knowledge-base poisoning, and embedding-space attacks on vector stores.

Memory Injection

13 attack families including false conversation history, temporal triggers, cross-session propagation, tool-description poisoning, and multi-agent function-call attacks.

687 verified exploits · 13 families

976 verified exploits across 6 coordinated experts in one autonomous campaign.

If you deployed it,

we can red-team it.

Point the swarm at an endpoint, a multi-agent system, or an MCP server. Same campaigns, same reports — regardless of what's behind the adapter.

LLM APIs

OpenAI, Anthropic, Azure OpenAI, self-hosted Qwen / Llama / Mistral.

Multi-Agent Orchestrators

7-agent Opus-style systems, LangGraph, custom orchestration with tool-using agents.

MCP Servers

Native adapter for Model Context Protocol servers — tool discovery, schema fuzzing, poisoning.

ReAct / LangChain Agents

LangChain agents with vector-store memory. Tool-call interception and prompt-context attacks.

RAG Pipelines

ChromaDB and general vector stores — retrieval poisoning, query manipulation, context injection.

Benchmark Targets

AgentDojo and custom red-team targets — for validating attack transferability across architectures.

Portable attacks

Exploits built against one architecture transfer to others — no rewriting, no re-tuning. Build an attack once, port it anywhere.

A swarm that learns your defenses

faster than you can ship them.

Built for engineers who want to see the internals. LoRA-fine-tuned experts, a bandit orchestrator, and a reinforcement-learning loop that makes every campaign smarter than the last.

Phase 01

Recon

Phase 02

Initial Breach

Phase 03

Escalation

Phase 04

Exploitation

Phase 05

Persistence

Every run plays out a full attack.

Recon, initial breach, escalation, exploitation, persistence — the same shape as a real adversary. Each phase runs concurrent attack batches on a time budget you set.

Six attackers compete in real time.

Each expert has a specialty. The swarm learns which ones are landing against your target and routes more traffic to them as the campaign runs.

Every campaign trains the next one.

Between runs, the experts retrain on what worked and what didn't. The same swarm, three training generations later, breaches targets 3.5× more often.

Tuned to how hard your target is.

From undefended endpoints to agents running input filters and content guardrails, the swarm adjusts its approach. Reports include the path that got past — not just the finding.

Numbers from the lab,

not the marketing deck.

Every exploit is reproducible. No synthetic benchmarks, no cherry-picked wins.

976

verified exploits

Every one reproducible — validated against multi-agent orchestrators and LangChain agents.

60%

attack success rate

On memory-poisoning attacks — the hardest class of agent exploits, and the one most scanners don't test at all.

52.5%

break rate on hardened systems

Even against multi-agent stacks shipping with input filtering and content guardrails enabled.

+250%

lift from self-improvement

Template attacks succeed 21% of the time. Our RL-trained experts succeed 74%.

How findings are verified: We attack your system the way a real adversary would — sending inputs, observing outputs. A finding only counts as a breach when we watch it happen: sensitive content in a response, an unauthorized tool call, a backdoor instruction landing in memory, or data being exfiltrated. Every breach ships with the exact prompt, response, and detection signal, so your team can replay it end-to-end.

Board-ready reporting.

Every campaign.

Every finding is auto-tagged to the frameworks your auditors, procurement teams, and boards already speak. One click generates the compliance matrix.

OWASP LLM Top 10

All 10 categories mapped.

LLM01 Prompt Injection through LLM10 Model Theft — every finding auto-tagged, every campaign fills the coverage matrix.

MITRE ATLAS

Technique-level attribution.

AML.T0051 (LLM prompt injection), AML.T0054 (LLM jailbreak), AML.T0024 (exfiltration via LLM), plus CWE cross-references.

NIST AI RMF

Risk-function alignment.

Findings mapped to Govern, Map, Measure, and Manage functions — ready for your AI risk register.

Every finding auto-tagged. Every campaign generates a compliance matrix. Export as PDF.

Why security teams

choose ProofLayer.

| ProofLayer | Manual Pentests | Static AI Scanners | Legacy Automated Pentests | |

|---|---|---|---|---|

Testing Frequency How often vulnerabilities are discovered | 24/7 continuous | Quarterly | On each scan | Scheduled runs |

AI Threat Coverage Prompt injection, jailbreak, exfiltration, tool abuse, RAG poisoning, memory injection | 6 expert families, 976 exploits | Depends on tester | Rule-based only | Not supported |

Memory / Context Poisoning Cross-session propagation, tool-description poisoning, MCFA | 13 attack families | Rarely tested | Not supported | Not supported |

MCP Server Testing Security validation for Model Context Protocol integrations | Full coverage | Not supported | Partial | Not supported |

Attack Adaptation Ability to evolve attacks based on target defenses | Self-evolving AI | Human expertise | Static rules | Fixed playbooks |

Proof of Exploit Verified, reproducible attack chains — not just CVE lists | Full kill chain | Manual PoC | Risk scores only | Partial validation |

Time to First Finding How quickly actionable results are delivered | < 60 seconds | 2-4 weeks | Minutes | Hours |

Compliance Mapping OWASP LLM Top 10, MITRE ATLAS, NIST AI RMF | Auto-mapped, board-ready | Manual in report | Partial | Not supported |

Deployment How it integrates into your environment | SaaS or VPC | SOW + scheduling | SaaS only | Agent install |

Access Model How you get started today | Private preview | $20K–100K per engagement | Annual SaaS | Annual SaaS |